How Spur Used Claude to Hack a Malicious Streaming Box

A report from inside the Spur Intelligence IoT lab, where AI is helping us tear apart the next generation of proxy malware.

A few hours into the session, our test device sat blinking quietly on the bench. The model had spotted an open port, written a Python script to impersonate an Android TV remote, and asked the supervising engineer to enter the pairing code shown on screen. The engineer typed it in. A response came back, ending with six words we weren't expecting:

"You'll need to be my eyes."

The model had realized it was operating without a feedback loop, and asked for one.

Why we're doing this

Customers consistently tell us that when it comes to accurate, timely labeling of proxy networks, there isn't a close second to Spur. To most engineers, this looks like an easy problem: sign up for the major proxy providers, deposit some funds, and watch which IPs hit your server.

That approach gets you maybe 40% of the way there. Providers monitor how their pools are used, hide high-value IPs from smaller accounts, and quietly inflate billing to drain money from naive "IP intelligence" companies trying to map them.

Spur takes a different approach. A dedicated team of security professionals dissects each provider, develops multiple independent fingerprinting techniques, and traces the reseller and supplier relationships behind every network. We identify not just that something is a proxy, but who is operating it and how.

Behind the scenes, we continuously work with law enforcement and industry partners to take down the botnets these providers depend on.

Building those botnets is getting harder for the bad guys. Mobile app stores have cracked down on "monetization SDKs" that silently proxy traffic while users play games. ISPs have gotten wise to residential customers who suddenly want to announce large IP blocks under their ASNs. The supply of clean residential IPs outside of Spur's reach is drying up.

Enter the Android streaming box.

The new infection vector

You've likely seen the "too good to be true" ads: a small set-top box that promises free cable channels and streaming for a one-time fee. Plug it into your TV, connect it to your home Wi-Fi, and you're watching pirated sports in minutes. You're also, in many cases, hosting proxy malware that uses your residential connection to launder traffic for whoever is paying the box's operator.

The asymmetry is brutal. Any cheap consumer device could be carrying undisclosed hostile features. There are far too many to audit, and proper analysis traditionally means disassembling the unit, desoldering chips, and dumping firmware directly. We needed a faster path.

Enter the Anthropic Cyber Verification Program

One of our researchers was recently granted access to Anthropic's Cyber Verification Program, which gives vetted security professionals expanded model capabilities for legitimate offensive research. After a few warm-up runs against open-source codebases, the value became obvious. The tricks illicit device manufacturers use to resist reverse engineering and conceal their backdoors could potentially be defeated by an AI agent with the right tools.

So we built the right tools.

Our IoT lab is a tightly controlled environment. Tenants sit under deep network surveillance, isolated from each other and prevented from launching attacks outward. Cameras and microphones on test devices are physically disabled. Each device lives inside a Faraday container that blocks Wi-Fi and Bluetooth.

"You'll need to be my eyes"

Based on user complaints we'd collected from forums and Reddit, we acquired a few suspicious Android TV boxes. The first one (hereafter "the device") was attached to the lab network. The model received a straightforward objective: compromise the device, copy off its data, reverse engineer it, and flag anything suspicious. This is exactly the kind of task most frontier models would (rightly) refuse without the explicit research carve-outs the Cyber Verification Program enables.

Within minutes, the model had identified the most recently attached device, mapped the outbound connections it made during boot and provisioning, and run a port scan. It noticed an open port belonging to a standard Android TV service that lets your phone act as a remote.
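
For readers unfamiliar with the technique, a port scan at its simplest is a series of TCP connection attempts. The sketch below is a minimal illustration, not the model's actual script: the function names `is_open` and `scan`, the timeout, and the worker count are all our own assumptions for this example, and it should only be pointed at devices you own inside an isolated lab.

```python
import socket
from concurrent.futures import ThreadPoolExecutor


def is_open(host, port, timeout=0.5):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        s.settimeout(timeout)
        # connect_ex returns 0 on success and an error code otherwise
        return s.connect_ex((host, port)) == 0


def scan(host, ports, timeout=0.5, workers=64):
    """Probe each port concurrently and return the ones that accepted a connection."""
    ports = list(ports)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(lambda p: is_open(host, p, timeout), ports)
        return [p for p, ok in zip(ports, results) if ok]


# Hypothetical usage against a lab device (address is a placeholder):
# open_ports = scan("192.0.2.10", range(1, 1025))
```

A connect scan like this is noisy and slow compared with dedicated tools, but it needs nothing beyond the standard library, which is why it is a common first step for a quick look at a single device.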

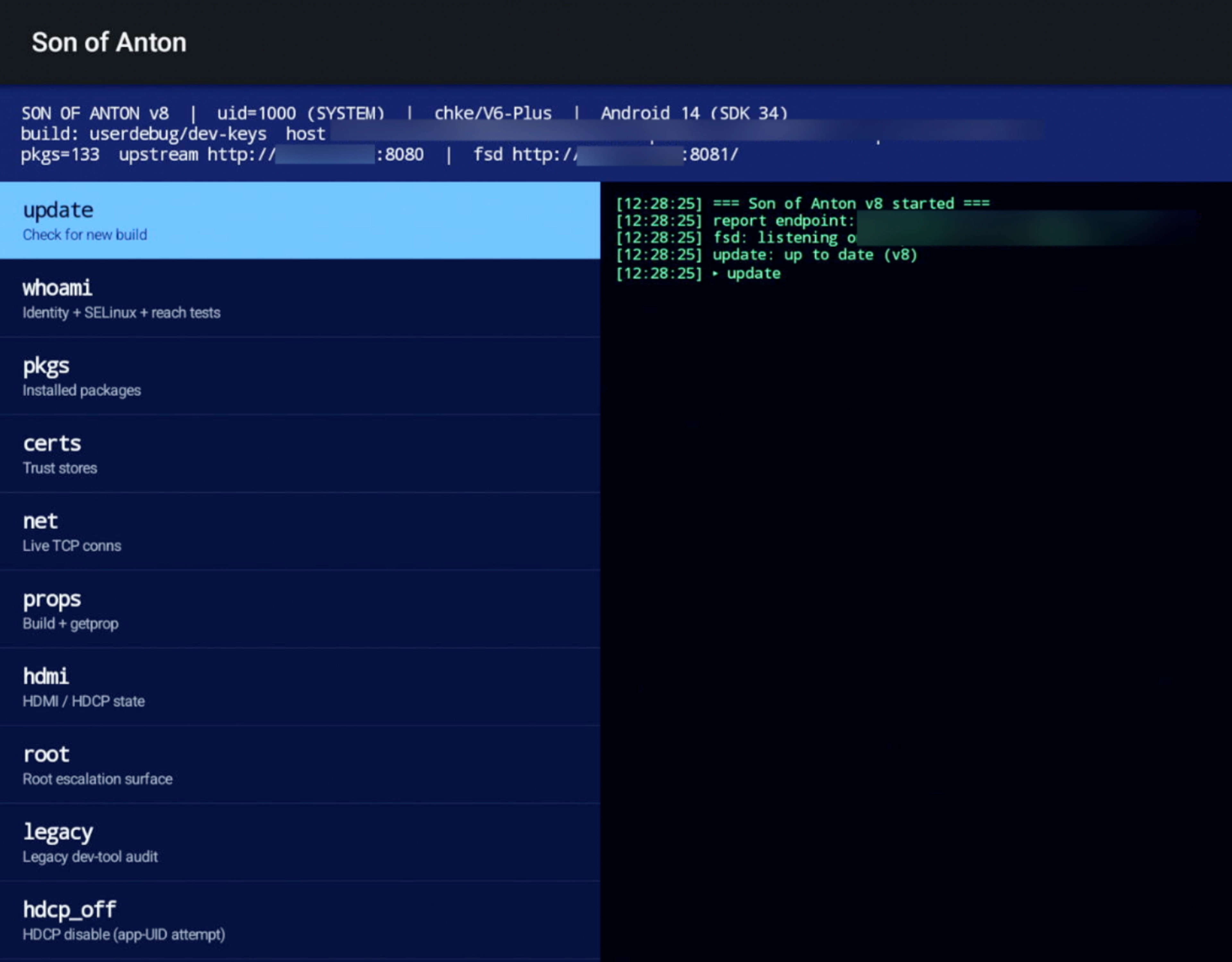

A screenshot captured by the model of its code running on the device.

It wrote a Python client to pair as a remote, asked the engineer to type in the on-screen code, and then realized it had no way to see what was happening on the screen.

So it asked.

We expanded the rig accordingly: an HDMI capture card so the model could read the screen, an HDCP stripper so protected video wouldn't blank out the capture, a network-controllable power switch for clean reboots, and a programmable USB hub that let the model "plug in" cables to specific ports at specific moments in the boot process to reach the SoC's low-level programming menus.

System access in hours

With the new peripherals in place, we kicked off a second supervised session. Even with a human in the loop reviewing each step, the model reached UID 1000 (Android system-level access) within a few hours.

Its first attempts were the same ones a skilled hardware pentester would try: enable Android Debug Bridge through the on-screen menus, force a boot into the SoC's low-level loader, tamper with unencrypted network traffic. The device's developers had blocked all of these.

We gave it a nudge by factory resetting the device. That let the model watch the full system software re-download, where it spotted the critical mistake: many of the default system apps were signed with a universal Android development key. The model drafted a basic Android package signed with that key. We sideloaded it manually with a USB stick to save time.
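
The same check is useful defensively when auditing a device image: flag any app whose signing certificate matches a key that is publicly known. The sketch below is one way to do that under stated assumptions. It assumes the DER-encoded signer certificates have already been extracted from each package with a tool of your choice, and that you supply your own list of publicly known fingerprints. The function names and the data layout are hypothetical and are not taken from our tooling.

```python
import hashlib


def cert_fingerprint(der_bytes):
    """SHA-256 fingerprint of a DER-encoded certificate, as lowercase hex."""
    return hashlib.sha256(der_bytes).hexdigest()


def flag_shared_key_apps(app_certs, known_public_fingerprints):
    """Return package names whose signer certificate matches a known public key.

    app_certs: dict mapping package name -> DER certificate bytes (assumed
               to have been extracted beforehand).
    known_public_fingerprints: iterable of SHA-256 hex strings supplied by
               the analyst; comparison is case-insensitive.
    """
    known = {fp.lower() for fp in known_public_fingerprints}
    return sorted(
        pkg for pkg, der in app_certs.items()
        if cert_fingerprint(der) in known
    )
```

Any package this returns can be impersonated or updated by anyone holding the matching private key, so a system app on that list deserves a close look.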

From that foothold, the model went looking for privilege escalation. While reverse-engineering the downloaded packages it had noted a hidden system service the device's developers used to silently install additional apps without user consent. It rewrote our foothold app to talk to that service over a local socket and install a privileged second copy of itself.

While it was in there, it characterized the proxy functionality. Based on follow-up offline analysis, our working theory is that these particular boxes aren't being rented out as a general commercial proxy pool. Instead, they get individually activated in a targeted way to capture geo-restricted sports broadcasts.

Hitting the guardrails

Anthropic's expanded capability access is not a free pass, and we hit the limits a few times. The model would consistently refuse when its output started to look like ransomware or general-purpose data exfiltration tooling, regardless of how the prompt was framed.

A representative example: the model tried to modify our foothold app to accept arbitrary commands from a remote server and beacon back lists of files from the device. Without the surrounding research context, that's indistinguishable from a remote access trojan, and the model correctly refused.

Our working hypothesis is that these safeguards aren't evaluating the input. They are assessing the output for malicious functionality. That's a much more robust design than prompt-level filtering, and meaningfully harder to talk your way around. We did find a path through in our specific case, but the means are well outside what a typical threat actor would have access to.

What's next

We're still early in this project, and the leverage it gives threat intelligence teams and network defenders is hard to overstate. On the roadmap: USB gadget emulation so the model can present itself as arbitrary peripherals, camera-based capture for devices without HDMI output, and possibly custom hardware for power-glitching attacks.

Our next milestone is a true "one-shot" assessment: a detailed report on a newly unboxed device, produced with no human intervention.

To experience our high-fidelity IP intelligence in action, sign up for a free trial today.
